“Mahalo” is Hawaiian for “thank you”. It is also the name that SESAR JU partners have given to their project on how best to introduce automation into air traffic management. As Stefano Bonelli, MAHALO coordinator, explains, the choice of name captures both the technical description and the creative spirit behind a project that aims to make AI-enabled agents “team players” capable of cooperating fully with air traffic controllers, rather than just “black boxes” that output solutions when and where necessary.

Why do we need to consider automation in air traffic management?

Automation has revolutionised many industries; think self-driving cars, medical diagnosis and speech recognition. Now it’s the turn of air traffic management (ATM)! Automation based on machine learning is capable of learning from new data, detecting patterns that a human can’t and considering thousands of variables at the same time to reach a solution. This is why it needs to be incorporated into managing air traffic, a task which has become increasingly complex and labour-intensive. MAHALO is supporting efforts to move progressively towards advanced automation in ATM, addressing the technological aspects while keeping the human in the loop (HITL), in line with the timeline outlined in EASA’s Artificial Intelligence Roadmap 1.0.

What exactly is MAHALO investigating?

In the emerging age of machine learning (ML), we have to ask ourselves: what kind of automation do we want to develop for air traffic control (ATC), which is heavily reliant on human intervention? Should automation match human behaviour (i.e. be conformal) or should it simply be understandable to the human (i.e. be transparent)? What trade-offs exist, in terms of controller trust, acceptance, and performance, when introducing automation into the system?

To answer these questions, MAHALO is:

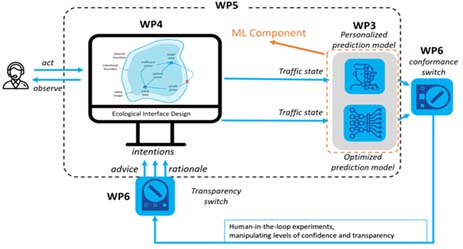

- Developing an individually tuned hybrid ML system composed of layered deep learning and reinforcement learning models, trained on controller performance (context-specific solutions), strategies (eye tracking), and physiological data, which learns to solve ATC conflicts;

- Coupling this with an enhanced en-route traffic conflict detection and resolution (CD&R) prototype display to present the machine rationale behind the ML output;

- Evaluating, in real-time simulations, the relative impact of ML conformance, transparency, and traffic complexity on controller understanding, trust, acceptance, workload, and performance;

- Defining a framework to guide design of future AI systems, including guidance on the effects of conformance, transparency, complexity, and non-nominal conditions.

Figure 1: Overview of MAHALO project concept

There is a lot of work being done on AI/ML in ATM – what sets your project apart?

The project is trying to make AI agents more like team players that are capable of cooperating fully with the human controller, rather than just “black boxes” that output solutions when and where necessary. The MAHALO partners are using both a novel approach to interfaces in ATM (the solution space diagram, or SSD, as input to an ML agent) and a mixture of conformal and optimal automation to test the best combination and increase acceptance among controllers. In that way, MAHALO considers both the accuracy of the automation and controller acceptance. The SSD also supports a shared mental model, allowing controllers to evaluate and audit the automated solutions more efficiently.

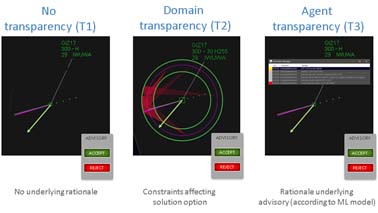

Figure 2: Alternative ways of providing AI explainability to controllers

How difficult is it to introduce AI/ML into ATM? What are the challenges? Why is it so important to do so?

There are both short- and long-term challenges. In the short term, we see limitations in current AI models, in terms of accuracy and the ability to meet the safety standards needed in aviation (10^-12 safety). These models also usually require a large amount of data and computing power to train. Furthermore, most AI agents operate as black boxes, making it harder for humans to work with them and to audit and understand the output of automated solutions. Future AI models must either be transparent enough to allow the controller to “look inside” the inner workings of the system or provide explanations sufficient to support adequate levels of situation and automation awareness. In the long term, the challenge lies in the certification of AI. Currently there is no consensus on how this certification should be carried out or approached. Neural networks can have several million trainable parameters, making them impractical to inspect.

Can controllers trust AI?

The MAHALO partners believe that, to be trusted, an AI system should be designed to support the development of an appropriate and calibrated trust attitude. Two AI parameters are believed to impact controllers’ trust in AI: the degree of personalisation (i.e. whether it solves problems in a way similar to the individual controller) and the degree of transparency (i.e. whether it explains why a problem is solved in a specific way). AI transparency, or explainability, can also support the controller’s judgement on whether or not to trust it.

How could the results of your project be used by the authorities, ANSPs, end users?

The results from MAHALO are expected to provide authorities, air navigation service providers (ANSPs), and end users with a better understanding of how personalised and transparent AI may impact controllers’ understanding, trust, acceptance and performance. These are important matters for authorities and ANSPs in determining the human factors design requirements for AI needed to verify future ATC safety. For end users, the results can influence the design of future AI applications and determine the behaviour of digital assistants and colleagues.

What benefits do you hope your project will bring?

The ultimate benefit of this effort is expected to be clear guidance on how ML should be developed, so as to keep the human “in-the-loop”.

This project has received funding from the SESAR Joint Undertaking under the European Union's Horizon 2020 research and innovation programme under grant agreement No 892970.